A low/no code future

Are we almost there?

In an era where technologies like ChatGPT, Tabnine, and GitHub Copilot are gaining increasing adoption, discussions about the potential of low/no code workflows have reignited. It doesn’t take much imagination to extrapolate how these tools could perform over the next 5, 10, or even 15 years. With a couple more GPU generations and some optimization of the models themselves, we could see open source models with the power of GPT-3.5 running entirely locally on GPUs that don’t cost $1,600 a pop. Some people optimistically believe this will lead to a no code future, where coding is no longer the exclusive domain of developers. In their minds, there are several compelling reasons why this shift could be advantageous:

Democratization of Application Development:

Enables non-developers to create applications, allowing more people to turn their ideas into tangible software without extensive technical know-how. This can lead to greater innovation as diverse groups of people have the tools to solve problems and create value.

Increased Development Speed:

Reduces the time taken to develop and launch applications. In a business context, this means faster time to market, allowing companies to adapt quickly to market changes or capitalize on new opportunities.

Cost Savings:

Reducing the reliance on specialized development resources can lead to cost savings. Businesses can avoid or reduce expenses associated with hiring, training, and maintaining a large developer workforce.

Now whether or not these are all strictly good things is up for debate. I’m skeptical that we will ever reach the no code era, which I define as the era where the majority of people are learning no code tools instead of traditional programming, and significant work is being done with them. But while I may be bearish on no code, I firmly believe we are already in the low code era. I’ve been doing a lot of machine learning recently, and I’m astonished by just how much work I can get done with numpy, pandas, scikit-learn, and matplotlib. With just those four libraries I can do everything from linear regression to neural networks and almost everything in between. What is amusing to me is that in just 50 lines of code, I can have a working machine learning model and some graphs. But while I may execute a script or Jupyter notebook with 50 lines of handwritten code, the code that ends up running is usually thousands of lines long.

If we conservatively estimate that my code calls upon 2000 lines of external library code, my actual contribution accounts for a mere 2.5% or so of the executed code. In reality, my share might be even smaller. While I appreciate not having to start from scratch each time I tackle a problem, the truth is I wouldn't even know where to begin without these libraries. I don’t know how to implement most of the algorithms I use on a day-to-day basis. I have no idea how to implement a cross-platform graphing library that can do 2D and 3D graphs. I don’t even know how to write the C code that Python ultimately calls to utilize SIMD and make my vectors go fast.
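Here is a rough sketch of that back-of-the-envelope math. The 50 and 2,000 line counts come from the estimate above; the function name and the larger library sizes are made up for illustration. Dividing 50 by 2,000 gives 2.5%, while counting the handwritten lines as part of the total executed code gives about 2.4%, so the point holds either way.

```python
def handwritten_share(own_lines: int, library_lines: int) -> float:
    """Return the fraction of all executed lines that were written by hand."""
    total = own_lines + library_lines
    if total <= 0:
        raise ValueError("need at least one line of code")
    return own_lines / total


own = 50  # handwritten lines in the script or notebook (figure from the text)

# 2,000 is the conservative estimate from the text; the larger sizes are
# hypothetical, to show how quickly the handwritten share shrinks.
for library in (2_000, 20_000, 200_000):
    share = handwritten_share(own, library)
    print(f"{library:>7,} library lines -> {share:.2%} handwritten")
```

The exact numbers matter less than the trend: every extra layer of library code pushes the handwritten share closer to zero.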

If I were using another programming language that didn’t already have these useful libraries, I’d be dead in the water. In fact, this chicken-and-egg problem, where a language needs useful libraries to attract users but needs users to write those libraries, is one of the first major hurdles a programming language has to overcome before it can reach mainstream adoption.

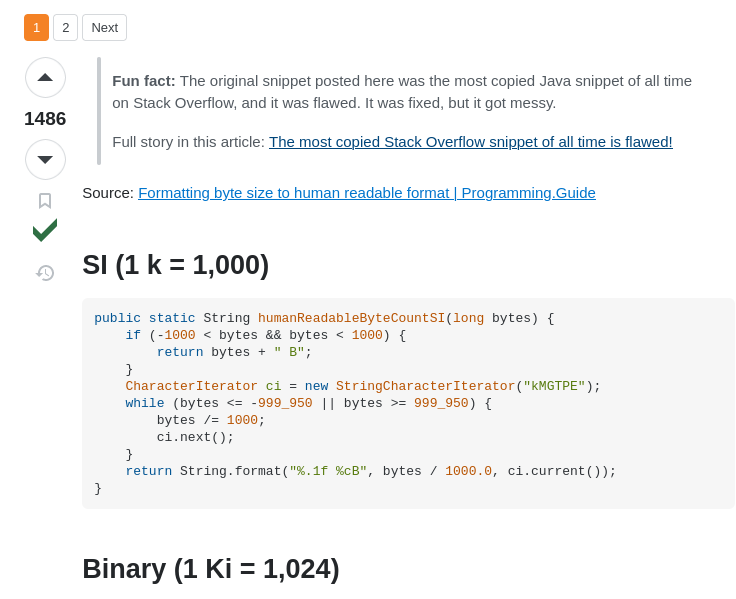

So while my initial thought whenever I hear people talking about no code solutions is to scoff, a part of me wonders how much of a leap no code is from what I’m doing right now. Lots of people just copy code from Stack Overflow already, bugs and all, so you could say that some people are living in the no code era already 🥲

Let’s look at a few arguments against no code solutions. Writing secure, robust software is important if you want your code to be correct and to expose as few attack vectors as possible. It can be argued that no code tools, while helpful for democratizing the development process, can lead to more bugs because the individuals using them do not have the foundational knowledge necessary to architect secure and robust systems.

But it’s not like the way we code now is any different. In traditional coding, vulnerabilities happen all the time due to poor coding practices, lack of updates, third-party libraries, or just the sheer complexity of systems. Plus, not all developers are security experts, so they might program something that seems robust but is full of security vulnerabilities.

Another point of contention is performance. No code tools are optimized for ease of use, not speed. Since each action in these tools usually compiles down to hundreds or even thousands of assembly instructions, there is usually a lot of inefficiency built in. Even the interfaces themselves are slow, as dragging and dropping visual blocks is a lot slower than banging out code at 80 wpm. But then again, if Casey Muratori’s Performance-Aware Programming Series (which is great and definitely worth subscribing to) is anything to go by, we are already writing software that is 100-1000x slower than it should be. He has demonstrated this on multiple occasions, and has even shown how you can get within spitting distance of C in Python, so using a garbage-collected language isn’t an excuse.

Then there is scalability. No code solutions tend to be very verbose as they abstract away a lot of the logic of the underlying code and hardware. Solutions that require only a few lines of traditional code become a twisted maze of visual blocks that look like actual spaghetti. This not only causes issues in the performance and memory department, but reduces the amount of information you get per screen, making it difficult to navigate. The layers of abstraction and verbosity make the challenge of writing larger programs explode with complexity. Scaling isn’t perfect in traditional programming languages either, even if it’s not as bad.

For example, Python, my native language, is my least favorite language to write anything longer than a script in. And its ease of use causes people to commit heinous programming sins. I’ve seen people write code in one Python notebook that calls another notebook with a single function, because we all seem to have forgotten how to write a Python file that doesn’t end in .ipynb. In the web domain, I constantly see older web developers complain that new developers don’t understand how to do basic web tasks without a framework. Think about that for a second. JavaScript is so abstracted that new developers don’t even understand vanilla JavaScript.

Picking on JavaScript some more, GitHub has mentioned how the average JavaScript project has 712 indirect dependencies and 30 direct dependencies. While the other languages have fewer, none has fewer than 12 direct dependencies, including Rust, which is typically used in low-level programming scenarios.

My point is, we laugh at and deride no code solutions for the problems that would arise when using them. But many of those problems are the same ones we struggle to address with traditional coding right now. There is an argument to be made that traditional programming has gotten worse over the years, and that this hasn’t stopped people from continuing to write software anyway. So if everyone started using no code tools today, it would actually just be more of the same. As someone who knows how to code “the traditional way”, when I need a Docker container to get a program to run reliably, it just makes me feel like we’ve gone too far.

There is a quote from the famous game programmer John Carmack that I feel is related to this.

Software is just a tool to help accomplish something for people — many programmers never understood that. Keep your eyes on the delivered value, and don’t overfocus on the specifics of the tools

— John Carmack

I struggle with this. Sometimes I get wrapped up in the specifics. I spend too much time deciding what stack everything is going to be in, instead of just keeping my eye on the whole point of the project. If no code solutions take off, I’ll give them a try. And if they help us achieve the ultimate goal of delivering value, whether it be to ourselves and our own personal satisfaction, or to others, then that is ultimately what we want, right?

Call To Action 📣

If you made it this far, thanks for reading! If you are new, welcome! I like to talk about technology, niche programming languages, AI, and low-level coding. I’ve recently started a Twitter and would love for you to check it out. I also have a Mastodon if that is more your jam. If you liked the article, consider liking and subscribing. And if you haven’t already, why not check out another article of mine? Thank you for your valuable time.